Real-time in vivo 3D imaging

Why High-Speed Imaging Systems Break Down in Real-Time 3D Biomedical Imaging

Rethinking Image Reconstruction, System Architecture, and Engineering Complexity in In-Vivo Microscopy

1. When Speed Is No Longer the Limitation

In many advanced biomedical imaging labs today, increasing speed is no longer the primary challenge.

- Scanning can be faster.

- Detectors can be more sensitive.

- Acquisition hardware can be upgraded.

And yet, when systems are pushed toward real-time 3D imaging - especially under in vivo and dynamic biological conditions - they often begin to behave unpredictably.

Frame rates become inconsistent. Image quality degrades. Parameters that once worked require constant retuning.

This is not simply a matter of system complexity. It signals a deeper structural limitation.

2. From Image Capture to Image Reconstruction

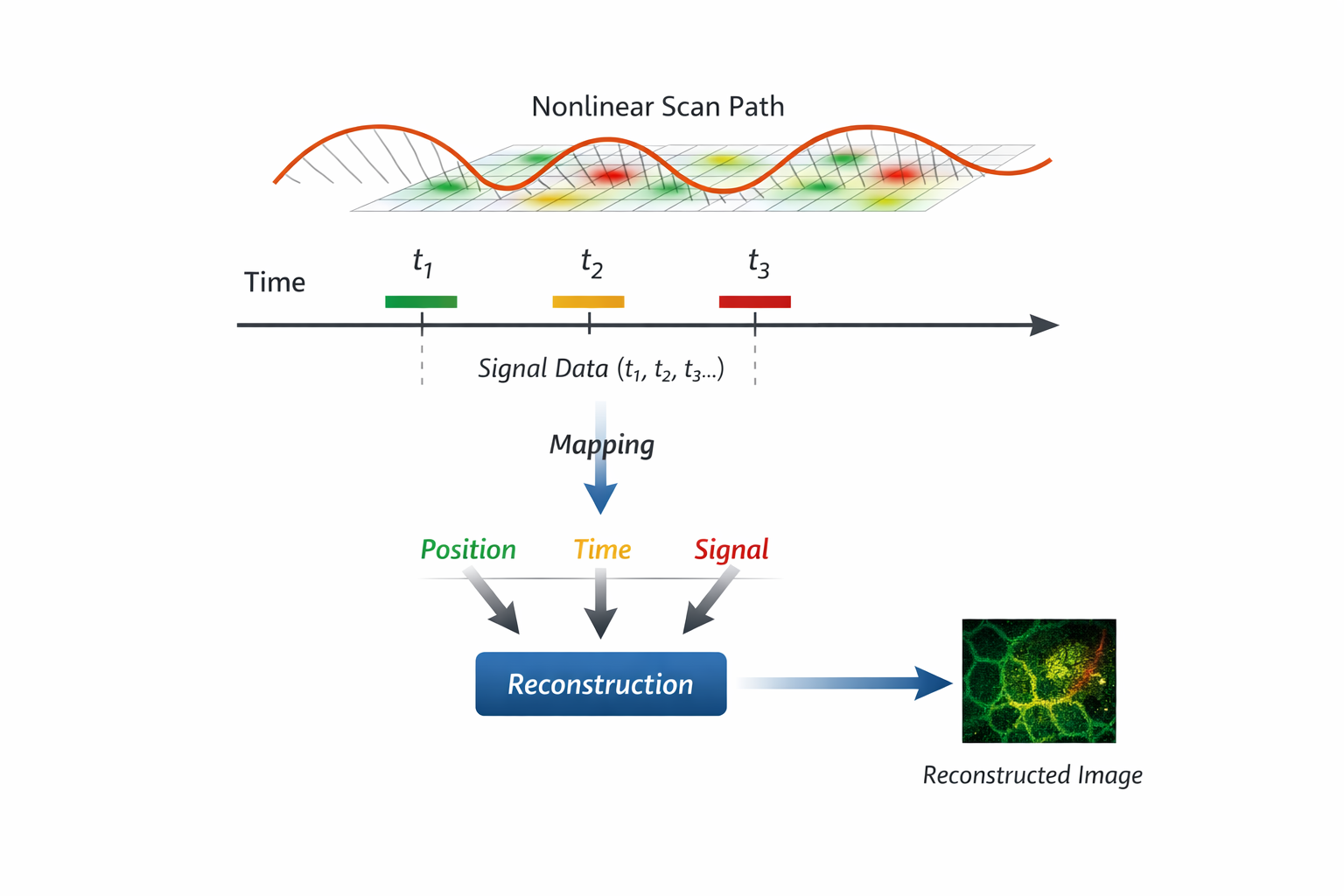

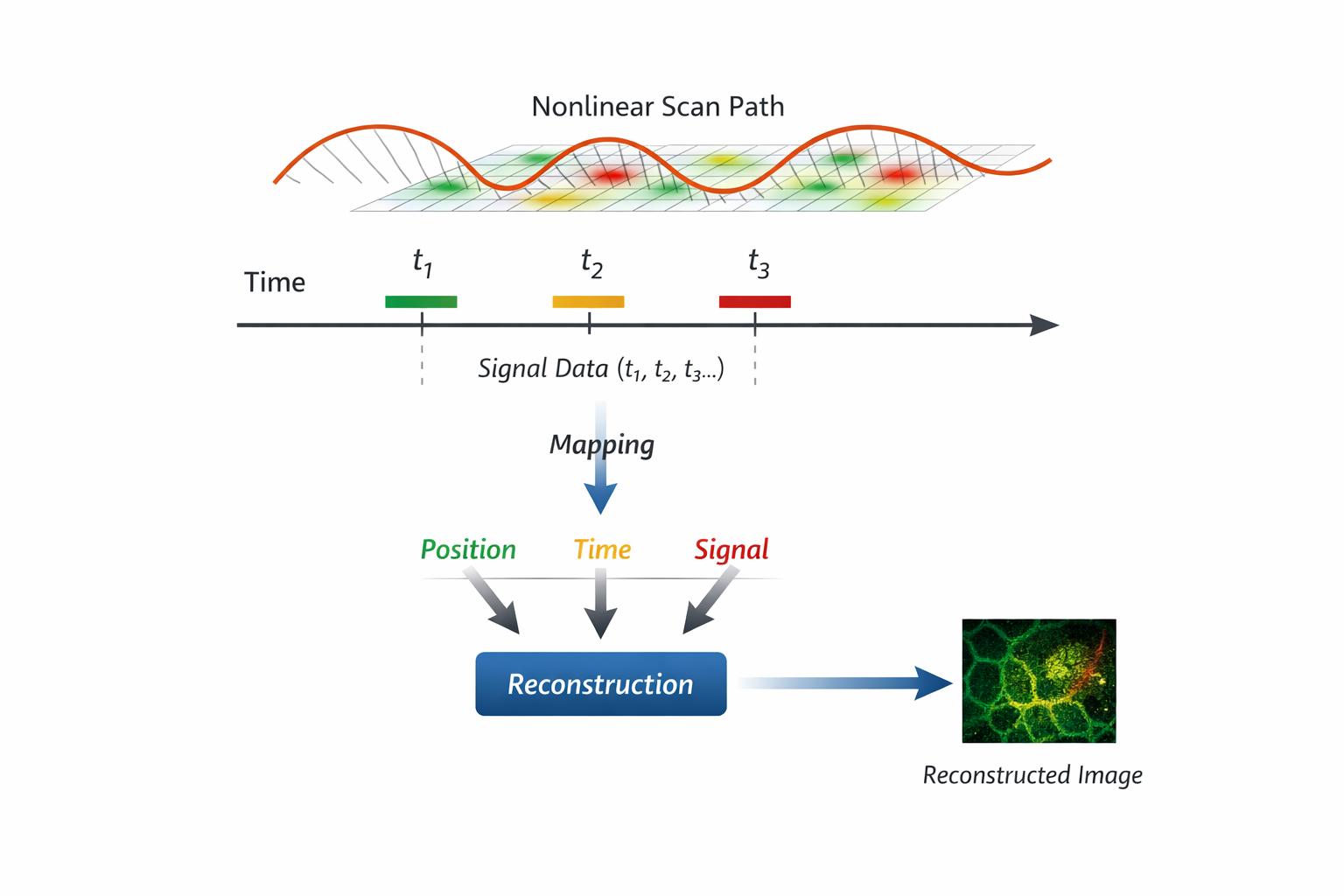

In laser scanning microscopy, images are not directly captured - they are reconstructed.

A signal is measured over time as the excitation beam moves across the sample. To form an image, the system must map:

- where the beam was

- when the signal was acquired

- how these correspond to spatial coordinates

Under high-speed conditions - especially with nonlinear scanning trajectories such as resonant scanning, where the beam position follows a sinusoid in time - this mapping becomes increasingly complex.

Spatial sampling is no longer uniform. The beam moves fastest at the center of the field and slows to a stop at the turning points, so the relationship between time and position changes continuously across each line.

As a result, image quality depends not only on optical resolution, but also on how accurately this time–space–signal relationship is maintained.

In this sense, a reconstructed image is only as accurate as the system's ability to preserve this relationship.
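To make the non-uniform sampling concrete, here is a minimal sketch that assumes an ideal resonant scanner whose normalized beam position follows x(t) = -cos(2πft) over one forward sweep, a digitizer sampling at a fixed rate, and a line divided into equal-width pixels. It counts how many time samples land in each spatial pixel. The helper name `resonant_pixel_counts` and the example values (8 kHz line rate, 80 MHz sampling, 512 pixels) are hypothetical, chosen only for illustration.

```python
import math


def resonant_pixel_counts(line_freq_hz: float, sample_rate_hz: float, n_pixels: int) -> list[int]:
    """Count how many digitizer samples fall into each of n_pixels equal-width
    spatial bins during one forward sweep of an ideal resonant scanner.

    Assumes the normalized beam position is x(t) = -cos(2*pi*f*t), which moves
    from -1 to +1 over half a scan period (one forward line).
    """
    # Number of samples acquired during half a period, i.e. one forward sweep.
    n_samples = round(sample_rate_hz / (2 * line_freq_hz))
    counts = [0] * n_pixels
    for i in range(n_samples):
        t = i / sample_rate_hz
        x = -math.cos(2 * math.pi * line_freq_hz * t)  # position in [-1, 1]
        # Map x onto a uniform pixel grid, clamping x == 1 into the last pixel.
        px = min(int((x + 1) / 2 * n_pixels), n_pixels - 1)
        counts[px] += 1
    return counts


if __name__ == "__main__":
    counts = resonant_pixel_counts(8000, 80e6, 512)
    print("samples in edge pixel:  ", counts[0])
    print("samples in center pixel:", counts[256])
```

With these assumed numbers, pixels at the edges of the line collect more than ten times as many samples as pixels at the center, because the beam lingers near its turning points. A reconstruction that assumed uniform time-to-position mapping would stretch features near the edges and compress those at the center.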

3. A Mismatch Between System Behavior and System Architecture

At high frame rates and in real-time 3D operation, imaging systems behave as tightly coupled systems.

Scanning motion, signal acquisition, and reconstruction are no longer separable steps. They must remain continuously aligned.

However, most systems, especially those built from conventional components, are based on:

- distributed hardware modules

- independent timing sources

- software-mediated synchronization

- PC- and CPU-centered data processing

These architectures are not designed to maintain strict timing relationships across the entire system. They are non-deterministic by nature.
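A rough calculation shows why independent timing sources become a problem at these speeds. This sketch assumes two free-running clocks, one driving the scanner and one driving the digitizer, whose frequencies differ by a constant number of parts per million (ppm). The 20 ppm mismatch, the 80 MHz sample rate, and the helper name `drift_in_samples` are hypothetical values for illustration.

```python
def drift_in_samples(ppm_mismatch: float, elapsed_s: float, sample_rate_hz: float) -> float:
    """Accumulated offset, in digitizer samples, between two free-running
    clocks whose frequencies differ by ppm_mismatch parts per million.

    Assumes a constant mismatch; real oscillators also drift with temperature.
    """
    offset_s = ppm_mismatch * 1e-6 * elapsed_s  # timing error grows linearly with time
    return offset_s * sample_rate_hz            # express it in sample periods


if __name__ == "__main__":
    # Assumed intervals: one 8 kHz scan period (125 us), 1 ms, and 1 s.
    for elapsed in (125e-6, 1e-3, 1.0):
        print(f"after {elapsed * 1e3:g} ms: {drift_in_samples(20, elapsed, 80e6):.1f} samples")
```

Under these assumptions the offset is only 0.2 samples per 125 µs scan period, but it grows to about 1,600 samples after one second, a large fraction of a single 62.5 µs sweep (5,000 samples at 80 MHz). One common mitigation is to derive all timing from a shared reference clock instead of relying on independent ones.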

4. When Systems Stop Scaling

As more capabilities are added - higher speed, more modalities, more parameters - the system does not simply become more powerful.

It becomes more fragile.

Small mismatches in timing or sampling begin to affect reconstruction. Manual tuning becomes necessary to maintain performance. System behavior becomes less predictable.

At some point, adding more components no longer improves the system.

It only increases the effort required to keep it working.
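Bidirectional scanning offers one concrete example of how a small timing mismatch turns into a visible artifact. In this assumed acquisition mode, samples are collected on both the forward and backward sweeps. If the assumed scan phase is off by a delay Δt, the forward line shifts one way and the backward line the other. Near the image center, where an ideal sinusoidal beam moves fastest, the relative offset is approximately 2 · Δt · v_max. The sketch below evaluates this linear approximation. `bidirectional_offset_px` and the example values are hypothetical.

```python
import math


def bidirectional_offset_px(delay_s: float, line_freq_hz: float, n_pixels: int) -> float:
    """Approximate misalignment, in pixels near the image center, between
    forward and backward lines when the assumed scan phase is off by delay_s.

    Assumes an ideal sinusoidal trajectory that spans n_pixels end to end.
    """
    # Position x(t) = (n_pixels / 2) * sin(2*pi*f*t) has peak speed (n_pixels / 2) * 2*pi*f.
    peak_speed_px_per_s = (n_pixels / 2) * 2 * math.pi * line_freq_hz
    # Forward and backward lines shift in opposite directions, so the
    # relative offset is twice the single-direction shift.
    return 2 * delay_s * peak_speed_px_per_s


if __name__ == "__main__":
    for delay_ns in (10, 50, 100):
        offset = bidirectional_offset_px(delay_ns * 1e-9, 8000, 512)
        print(f"{delay_ns:>4} ns timing error -> {offset:.2f} px between alternate lines")
```

With an assumed 8 kHz line rate and 512 pixels per line, a 50 ns error (four sample periods at 80 MHz) already misaligns alternate lines by roughly 1.3 pixels at the center, enough to produce a jagged, comb-like offset on vertical edges. The approximation only holds near the center; toward the turning points the beam slows and the shift shrinks.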

5. A Shift in How Research Systems Are Built

For many years, building custom systems in research labs followed an ad hoc model. When problems arose, they were addressed incrementally: adding components, adjusting parameters, refining control loops.

This approach was effective.

It enabled flexibility, rapid iteration, and hands-on system understanding.

But under current demands - real-time 3D imaging, high-speed operation, and increasingly complex biological conditions - this model is reaching its limits.

The effort required to maintain the system increasingly competes with the effort required to conduct the science itself.

6. Beyond Hardware: The Software and System Engineering Barrier

The challenge is no longer confined to hardware. Modern imaging systems require tightly integrated workflows, where:

- user interfaces interact with hardware in real time

- timing control directly affects data acquisition

- data flow determines whether reconstruction is possible

These are no longer linear pipelines. They are coupled, time-sensitive systems.

At the same time, the scale and speed of data exceed what traditional PC- and CPU-centered architectures can reliably support.

- Latency becomes unpredictable.

- Data paths become too complex.

- Timing relationships become difficult to maintain.
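The data side of this can be estimated with simple arithmetic. This sketch assumes a digitizer sampling two detector channels at 80 MHz with 2 bytes per sample and feeding a host through a 64 MiB buffer. These values and both helper names are hypothetical. It computes the sustained raw rate and how long the host can stop draining the buffer before samples are lost.

```python
def raw_data_rate_bytes_per_s(sample_rate_hz: float, n_channels: int, bytes_per_sample: int) -> float:
    """Sustained raw output of a digitizer, in bytes per second."""
    return sample_rate_hz * n_channels * bytes_per_sample


def max_consumer_stall_s(buffer_bytes: int, rate_bytes_per_s: float) -> float:
    """How long the host can stop draining a buffer before it overflows.

    Assumes the buffer starts empty and nothing is drained during the stall.
    """
    return buffer_bytes / rate_bytes_per_s


if __name__ == "__main__":
    rate = raw_data_rate_bytes_per_s(80e6, 2, 2)    # assumed: 2 channels, 16-bit samples
    stall = max_consumer_stall_s(64 * 2**20, rate)  # assumed: 64 MiB host-side buffer
    print(f"raw rate: {rate / 1e6:.0f} MB/s")
    print(f"max stall before overflow: {stall * 1e3:.0f} ms")
    print(f"data per 10-minute session: {rate * 600 / 1e9:.0f} GB")
```

Under these assumptions the stream is about 320 MB/s, and the host has roughly 0.2 s of slack before the buffer overflows. Sustaining that throughput in isolation is often feasible. The harder part is guaranteeing it continuously while the same machine also runs reconstruction, display, and user interaction, because any pause in the operating system, driver, or application that exceeds the slack drops data.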

Even when FPGA-based approaches are introduced, the problem does not disappear; it transforms.

It now requires:

- firmware-level control

- timing-aware data pipeline design

- deep integration between acquisition and reconstruction

This represents a fundamental shift in the level of system engineering required.

7. A Growing Gap in Practice

This leads to a growing tension in modern biomedical imaging environments:

- Between flexibility and stability.

- Between system control and usability.

- Between engineering effort and scientific focus.

▹Commercial systems, developed by highly specialized teams, offer stability and performance - but are often closed and resource-intensive.

▹Custom-built systems offer flexibility - but place increasing demands on engineering expertise.

As system complexity grows, neither approach fully resolves the challenge.

This raises a practical question for many research teams:

▹not just how to improve their systems,

▹but whether the current approach to building and maintaining them is still sustainable.

8. Rethinking the System as a Whole

We may be reaching a point where the central question is no longer:

▹“How do we improve individual components?”

But rather:

▹“How do we design systems that can maintain coherence across timing, data, and control - by design?”

This requires a shift from assembling tools to architecting systems.

9. Toward a Different Approach

This is the direction we have been exploring.

▹Not simply to improve performance,

▹but to enable imaging systems that can be built, maintained, and evolved within real research environments.

Systems that reduce the engineering burden while preserving the flexibility required for scientific discovery.

◈ If this reflects challenges you are currently facing in your imaging systems, we would be glad to exchange perspectives.
